Automating disk limits in vSphere

Following up on my last post, Limiting disk I/O in vSphere, we will now look at how to automate this.

First off, we need some way to identify what limit a specific disk should have.

You can do this in multiple ways: you could use an external source, Tags or, as we've done, Storage Policies.

All our VMs and VMDKs have a Storage Policy that controls which storage they must be compliant with. We have named our policies so that the name identifies the limit a disk should have, and we can use this to set the limit corresponding to the policy.

For setting the limits we will use PowerCLI as shown in the previous post. We will create a PowerShell script that retrieves disks and policies and runs the PowerCLI command for setting the correct limit.

Update: After a recent blog post from Duncan Epping we realized that the mClock scheduler does not actually normalize I/O size to 32 KB as I described in a previous article. This means that we cannot control the MB/s throughput for disks as we had expected. It also means that setting limits through Storage Policies has the same effect as the examples below. If you use Storage Policies in your environment, which I would recommend, you can control everything from there and do not need the steps described below. Either way, I will keep this post as an example of how to automate things through PowerCLI.

Working with limits using PowerCLI

First, let's refresh how to work with limits through PowerCLI.

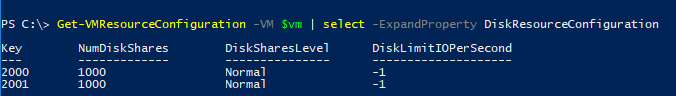

We are using the *-VMResourceConfiguration cmdlets. Limits are set on disks, so we need to specify that we want to see the DiskResourceConfiguration property. In this example the VM has two disks, so we get the configuration for both.

Remember that the -1 setting corresponds to "Unlimited".

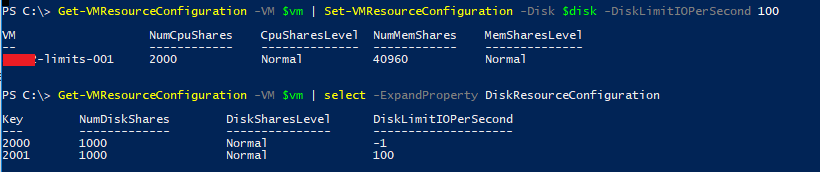

So if we want to set a limit on a disk, we use Set-VMResourceConfiguration and specify the disk and the wanted IOPS setting.
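As a text version of these two steps, a minimal sketch could look like the following. It assumes an active Connect-VIServer session; the function name Set-DiskIopsLimitByName and its parameters are hypothetical and simply wrap the Get-VM, Get-HardDisk, Get-VMResourceConfiguration and Set-VMResourceConfiguration cmdlets.

```powershell
# Hypothetical helper (not part of PowerCLI): set an IOPS limit on one disk by VM and disk name.
# Assumes an active Connect-VIServer session.
function Set-DiskIopsLimitByName {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory = $true)][string]$VMName,
        [Parameter(Mandatory = $true)][string]$DiskName,   # e.g. "Hard disk 1"
        [Parameter(Mandatory = $true)][int]$IopsLimit      # -1 means "Unlimited"
    )

    $vm   = Get-VM -Name $VMName
    $disk = Get-HardDisk -VM $vm -Name $DiskName

    # Limits live in the VM's resource configuration, one entry per disk
    $result = Get-VMResourceConfiguration -VM $vm |
        Set-VMResourceConfiguration -Disk $disk -DiskLimitIOPerSecond $IopsLimit

    # Return the per-disk entries so the new value can be inspected
    $result.DiskResourceConfiguration
}

# Example call (placeholder names):
# Set-DiskIopsLimitByName -VMName "MyVM" -DiskName "Hard disk 1" -IopsLimit 500
```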

I have created a quick wrapper around Get-VMResourceConfiguration that outputs the limit for a specific disk, which makes it a bit friendlier to use, as well as a function for setting the limit.

    function Get-VMDiskLimit {
        <#
        Help text omitted....
        #>
        [CmdletBinding()]
        param(
            [Parameter(Mandatory=$true,ValueFromPipeline=$true,ParameterSetName="vmdk")]
            [Alias("VMDK")]
            [VMware.VimAutomation.ViCore.Types.V1.VirtualDevice.FlatHardDisk]
            $Harddisk
        )

        # The process block makes sure every disk coming down the pipeline is handled
        process {
            # Pick the resource configuration entry whose key matches this disk
            $Harddisk.Parent | Get-VMResourceConfiguration |
                Select-Object -ExpandProperty DiskResourceConfiguration |
                Where-Object {$_.Key -eq $Harddisk.ExtensionData.Key} |
                Select-Object @{l="VM";e={$Harddisk.Parent.Name}},@{l="Label";e={$Harddisk.Name}},@{l="IOPSLimit";e={$_.DiskLimitIOPerSecond}}
        }
    }

    function Set-VMDiskLimit {
        <#
        Help text omitted...
        #>
        [CmdletBinding()]
        param(
            [Parameter(Mandatory=$true,ValueFromPipeline=$true,ParameterSetName="vmdk")]
            [Alias("VMDK")]
            [VMware.VimAutomation.ViCore.Types.V1.VirtualDevice.FlatHardDisk]
            $Harddisk,
            [Parameter(Mandatory=$true)]
            [Alias("Limit")]
            [int]
            $IOPSLimit
        )

        process {
            # Apply the limit to the given disk through the parent VM's resource configuration
            $result = Get-VMResourceConfiguration -VM $Harddisk.Parent | Set-VMResourceConfiguration -Disk $Harddisk -DiskLimitIOPerSecond $IOPSLimit

            # Return only the entry for the disk we just changed
            $result.DiskResourceConfiguration | Where-Object {$_.Key -eq $Harddisk.ExtensionData.Key}
        }
    }

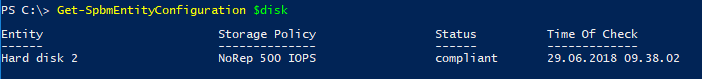

Storage Policies

As mentioned, we use the name of the Storage Policy as the source of the wanted IOPS value. So let's first check how we can retrieve the Storage Policy for a disk.

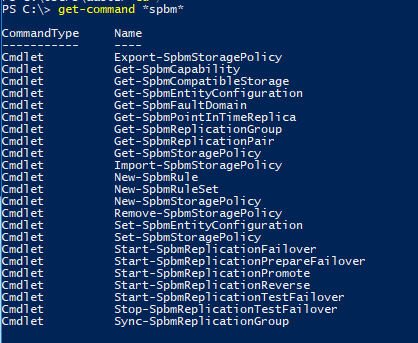

When working with Storage Policies we use the Spbm cmdlets. SPBM stands for Storage Policy Based Management, and there is a lot of configuration that can be done.

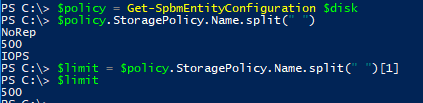

So, to check the policy for a disk we use the Get-SpbmEntityConfiguration cmdlet.

Now let's use the name of the policy to extract the expected limit.
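A minimal sketch of that extraction, assuming the policy names follow a "prefix, space, number" convention such as "Limit 500". The example names and the helper Get-ExpectedLimitFromPolicyName are hypothetical; only the idea of taking the second space-separated token comes from the script further down.

```powershell
# Hypothetical helper: pull the IOPS limit out of a policy name such as "Limit 500".
# The "<prefix> <number>" naming convention is an assumption for this sketch.
function Get-ExpectedLimitFromPolicyName {
    param(
        [Parameter(Mandatory = $true)][string]$PolicyName
    )

    # The limit is expected to be the second space-separated token
    $tokens = $PolicyName.Split(" ")
    $limit = 0
    if ($tokens.Count -ge 2 -and [int]::TryParse($tokens[1], [ref]$limit)) {
        return $limit
    }

    # No numeric token found: return $null so the caller can skip the disk
    return $null
}

Get-ExpectedLimitFromPolicyName -PolicyName "Limit 500"   # returns 500
Get-ExpectedLimitFromPolicyName -PolicyName "Standard"    # returns $null
```

Returning a number instead of a string also avoids surprises when the value is later compared with the current limit.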

At this point we know how to get and set the current limit, as well as a way to find the expected limit. With this we can create some logic to check if a disk has the expected limit, and change it if needed.

The script

One challenge when building the script is that we potentially need to pick out only the disks that are expected to have an IOPS limit set. We could do this by using Storage Policies as our starting point and retrieving only the disks that have specific policies, but there are some bugs in how the retrieval of these disks works. Because of this we need to retrieve all disks and then check whether the configured Storage Policy qualifies for limit checking.

If we find a disk that is configured with a policy we want to control limits for, we carry on with checking the current limit on that disk. If the limit is not correct, we set the $set variable to $true.
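The "retrieve everything, then filter" approach could be sketched roughly like this. The function name Select-DiskWithLimitPolicy and the example policy names are hypothetical; Get-SpbmEntityConfiguration is the PowerCLI cmdlet shown earlier, and an active Connect-VIServer session is assumed.

```powershell
# Hypothetical helper: return only the disks whose Storage Policy is in the list to check.
# Assumes an active Connect-VIServer session when fed real disks.
function Select-DiskWithLimitPolicy {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory = $true, ValueFromPipeline = $true)]$HardDisk,
        [Parameter(Mandatory = $true)][string[]]$PolicyName
    )

    process {
        $config = Get-SpbmEntityConfiguration -HardDisk $HardDisk

        # Disks without a policy, or with a policy we do not manage, are skipped
        if ($config.StoragePolicy -and $config.StoragePolicy.Name -in $PolicyName) {
            $HardDisk
        }
    }
}

# Example: retrieve every disk, then keep the ones to check (policy names are placeholders)
# $policies2check = "Limit 500", "Limit 1000"
# $disks = Get-VM | Get-HardDisk | Select-DiskWithLimitPolicy -PolicyName $policies2check
```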

    # Reset the flag for every disk so a value from a previous disk cannot carry over
    $set = $false
    $diskPolicy = Get-SpbmEntityConfiguration -HardDisk $disk
    if($diskPolicy.StoragePolicy){
        if($diskPolicy.StoragePolicy.Name -in $policies2check){
            # The limit is the second space-separated token of the policy name
            $expectedLimit = $diskPolicy.StoragePolicy.Name.Split(" ")[1]
            $limit = Get-VMDiskLimit -Harddisk $disk | Select-Object -ExpandProperty IOPSLimit
            if($limit -gt 0 -and $limit -ne $expectedLimit){
                #Limit is set, but is not corresponding to expected limit
                $set = $true
            }
            elseif($limit -eq $expectedLimit){
                #Limit is set and corresponds to expected limit. Nothing to do...
            }
            else{
                #Limit is not set or couldn't be retrieved!
                $set = $true
            }
        }
    }

Now, after checking the limits, we carry on by correcting the limit if needed:

    #Policy and limits are checked. If the $set variable is $true we will carry on with changing the limit
    if($set){
        $result = Set-VMDiskLimit -Harddisk $disk -IOPSLimit $expectedLimit
        $limitsSet++
        #Do a check on the limit set
        if($result.DiskLimitIOPerSecond -ne $expectedLimit){
            #Limit is still not set to the expected value
        }
    }

These examples do not include any logging, and we could probably do some more error handling. The full script (with logging) can be found on GitHub.
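As one possible direction for the error handling, here is a rough sketch that wraps the Set-VMDiskLimit function from earlier in this post. The wrapper name Invoke-DiskLimitUpdate and its boolean return value are assumptions made for illustration, and this is not how the full script on GitHub does it.

```powershell
# Hypothetical wrapper around the Set-VMDiskLimit function defined earlier in this post:
# apply the limit, verify it, and report failures without stopping the whole run.
function Invoke-DiskLimitUpdate {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory = $true)]$HardDisk,
        [Parameter(Mandatory = $true)][int]$ExpectedLimit
    )

    try {
        # -ErrorAction Stop turns non-terminating errors into exceptions we can catch
        $result = Set-VMDiskLimit -Harddisk $HardDisk -IOPSLimit $ExpectedLimit -ErrorAction Stop
    }
    catch {
        Write-Warning "Could not set limit on '$($HardDisk.Name)': $($_.Exception.Message)"
        return $false
    }

    # Verify that the value reported back matches what we asked for
    if ($result.DiskLimitIOPerSecond -ne $ExpectedLimit) {
        Write-Warning "Limit on '$($HardDisk.Name)' is $($result.DiskLimitIOPerSecond), expected $ExpectedLimit"
        return $false
    }

    return $true
}
```

The caller can then increment a counter only when the function returns $true, and log the warnings for the disks that failed.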

Summary

This post has shown how we have automated the process of controlling disk I/O limits with the use of Storage Policies in vSphere. Although we use a naming convention on policies to extract the limit value rather than an actual property, you could choose to do this in other ways, as I mentioned.

You might know that in 6.5 the new Storage I/O Control can specify the limit in a policy, but this limits IOPS only and not bandwidth, which is what we see getting out of hand before IOPS. This is because I/Os differ in size, which creates huge differences in bandwidth usage. When writing this post I believed that the "old" SIOC limit setting normalized I/O to 32 KB and thereby effectively limited the bandwidth as well; as noted in the update above, that turned out not to be the case with the mClock scheduler.
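To illustrate how much the I/O size matters at a fixed IOPS rate, here is a small arithmetic sketch. The function name Get-ThroughputMBps is hypothetical and the numbers are plain multiplication, not measurements.

```powershell
# Throughput in MB/s for a given IOPS rate and I/O size (1 MB = 1024 KB)
function Get-ThroughputMBps {
    param(
        [Parameter(Mandatory = $true)][int]$Iops,
        [Parameter(Mandatory = $true)][int]$IoSizeKB
    )
    return ($Iops * $IoSizeKB) / 1024
}

Get-ThroughputMBps -Iops 1000 -IoSizeKB 4     # 3.90625 MB/s
Get-ThroughputMBps -Iops 1000 -IoSizeKB 32    # 31.25 MB/s
Get-ThroughputMBps -Iops 1000 -IoSizeKB 256   # 250 MB/s
```

The same 1000 IOPS limit therefore allows anything from roughly 4 MB/s to 250 MB/s depending on the I/O size.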

The script we have built can also be extended, for example to set a default policy on disks where none is found, if that is desired in your environment.
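Such an extension might look roughly like the sketch below. The function name Set-DefaultDiskPolicy and the policy name in the example call are hypothetical; it relies on the Get-SpbmEntityConfiguration, Get-SpbmStoragePolicy and Set-SpbmEntityConfiguration cmdlets and assumes an active Connect-VIServer session and an existing policy with the given name.

```powershell
# Hypothetical helper: assign a default Storage Policy to a disk that has none.
# Assumes an active Connect-VIServer session and that the named policy exists.
function Set-DefaultDiskPolicy {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory = $true)]$HardDisk,
        [Parameter(Mandatory = $true)][string]$DefaultPolicyName
    )

    $config = Get-SpbmEntityConfiguration -HardDisk $HardDisk

    # Leave disks alone when they already have a policy
    if ($config.StoragePolicy) {
        return $config
    }

    $policy = Get-SpbmStoragePolicy -Name $DefaultPolicyName
    Set-SpbmEntityConfiguration -Configuration $config -StoragePolicy $policy
}

# Example call (placeholder policy name):
# Set-DefaultDiskPolicy -HardDisk $disk -DefaultPolicyName "Limit 500"
```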

Thanks for reading. Happy automating!