Limiting disk I/O in vSphere

As a service provider, we need some way of preventing individual VMs from using too much of our shared resources.

When it comes to CPU and memory, this is rarely an issue, as we try not to overcommit these resources, at least not memory. For CPU, we closely monitor counters like CPU Ready and Latency to ensure that our VMs have access to the resources they need.

For storage, this can be more difficult. While we usually have 50-60 VMs on a host, we will probably have hundreds on a storage array (SAN). Of course, the SAN should be specced to handle the IOPS and throughput you need, but you also need to balance that against the available disk space and, maybe most importantly, the cost. On top of that, storage utilization is often intermittent and bursty, which makes it even harder to plan for and control.

In our world, we would like to set both IOPS and I/O throughput limits on a per-VM basis. You would think this shouldn't be that difficult today, but our experience over the past couple of years has proven otherwise.

Background

Both vSphere and our storage arrays come with disk QoS. The SAN, like most traditional SANs, does this on a per-LUN basis, which of course is not a solution for us, since each LUN is shared by many VMs. There are storage solutions out there that work closer to the VM, but we have to find solutions with what we already have. In vSphere, you specify a limit on a per-VMDK basis, which is better, but still not what we want, which is per-VM.

Up until vSphere 6.5 Update 1, we used the old disk scheduler from before vSphere 5.5. It aggregated the limits of all VMDKs on the same datastore into a limit for the whole VM, and let a single VMDK use all of that limit if the other VMDKs didn't need it.
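The aggregation can be written as a small formula. This is only a restatement of the behavior described above; the disk names and limit values in the example are illustrative, not taken from our environment:

```latex
L_{\text{VM},\,d} = \sum_{i \in \text{disks}(\text{VM},\,d)} L_i,
\qquad \text{e.g. } L_{\text{disk1}} = 500,\; L_{\text{disk2}} = 1000 \;\Rightarrow\; L_{\text{VM},\,d} = 1500\ \text{IOPS}
```

With these numbers, disk1 could use up to 1500 IOPS on datastore d as long as disk2 was idle.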

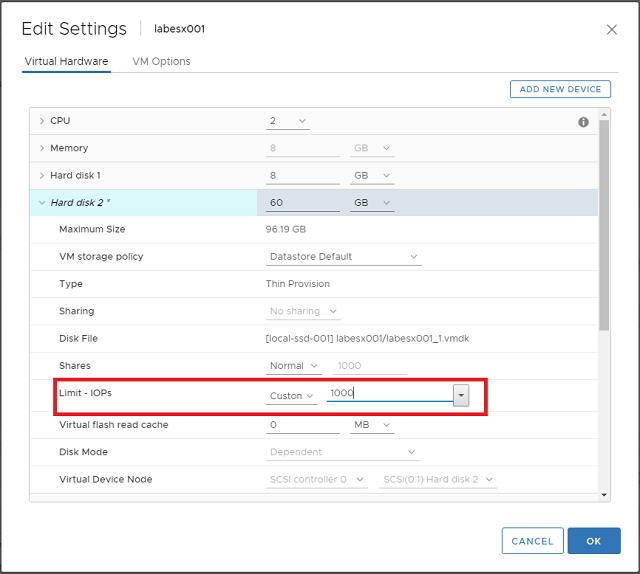

We set the limits in the disk configuration. The IOPS limit can be set through "Edit Settings" on the VM's hard disks, while the I/O throughput limit (i.e. MB/s) has to be set in the .vmx file by specifying a bandwidth cap for each disk's SCSI ID.

This worked well until we ran into several bugs that made the feature behave inconsistently.

Then, when we had to upgrade to 6.5 U1 (partly because of Meltdown/Spectre patching), we found that VMware had removed the limiting feature from the old scheduler. There's no explicit statement about this in the release notes, but they fixed a different bug related to setting limits, which we think is connected. We therefore switched over to the newer mClock scheduler.

This scheduler only controls individual VMDKs, which means we lost the ability to limit per VM. While this is of course a step back, we can live with it as long as the limiting actually works. We still see some situations where the scheduler does not enforce the limits, but it's more consistent than before.

We need to do some "redesign" of our VMs, reducing the number of disks per VM so that the per-VMDK limits come as close as possible to a per-VM limit. For new VMs this won't be much of a problem, but existing ones will need some disk consolidation.

Another change with the mClock scheduler is that you now only set the IOPS limit, and the throughput limit (MB/s) is calculated from it: IOPS / 32 = MB/s limit. The 32 comes from a normalized I/O size of 32 KB.
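Spelled out, the formula as described here assumes every I/O counts as 32 KB and converts KB/s to MB/s by dividing by 1024. As the update below explains, mClock turned out not to normalize I/O size this way, so treat this purely as an illustration of the original reasoning:

```latex
\text{MB/s limit} = \frac{\text{IOPS} \times 32\ \text{KB}}{1024\ \text{KB/MB}} = \frac{\text{IOPS}}{32},
\qquad \text{e.g. } \frac{1000\ \text{IOPS}}{32} = 31.25\ \text{MB/s}
```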

Update: After reading a recent blog post by Duncan Epping, we realized that the mClock scheduler does not actually normalize I/O size to 32 KB as I've described in this article. This means that we cannot control the MB/s throughput for disks. It also means that setting limits through Storage Policies has the same effect as the examples below.

Setting limits

So, that was some background. Now on to how you actually set the limit. As mentioned, with the mClock scheduler you only set the IOPS limit, and you can do this in several ways.

GUI

Through the GUI you control it via Edit settings on the VM

PowerCLI

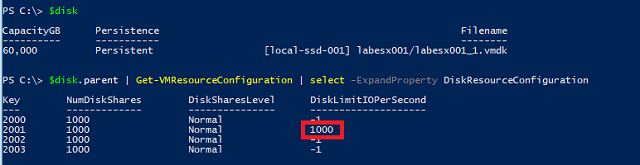

With PowerCLI you work with the *-VMResourceConfiguration cmdlets.

First off, we can check the current limits. We are working with disks, so I have put the disk I want to control in the $disk variable. Since the VMResourceConfiguration cmdlets need a VM object, we'll use the disk's parent VM to fetch the resource configuration and check the limits on all its disks.
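A minimal sketch of that check, assuming VMware PowerCLI is loaded and a Connect-VIServer session is already open. Get-DiskIopsLimit is a hypothetical helper name used for illustration, not a PowerCLI cmdlet; the cmdlets it calls (Get-VMResourceConfiguration and Select-Object) are real, and the DiskResourceConfiguration property is where the per-disk settings, including DiskLimitIOPerSecond, are exposed.

```powershell
# Sketch only: assumes PowerCLI is loaded and Connect-VIServer has been run.
# Get-DiskIopsLimit is a hypothetical helper, not part of PowerCLI.
function Get-DiskIopsLimit {
    param(
        # A hard disk object, e.g. from Get-VM | Get-HardDisk
        [Parameter(Mandatory)]
        $Disk
    )
    # The *-VMResourceConfiguration cmdlets work on VM objects,
    # so go through the disk's parent VM and list the per-disk
    # settings (Key, shares, DiskLimitIOPerSecond) for all its disks.
    $Disk.Parent | Get-VMResourceConfiguration |
        Select-Object -ExpandProperty DiskResourceConfiguration
}
```

Usage would look something like `$disk = Get-VM -Name "vm01" | Get-HardDisk -Name "Hard disk 1"` followed by `Get-DiskIopsLimit -Disk $disk`, where the VM and disk names are placeholders.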

We can see that one of the disks has a 1000 IOPS limit.

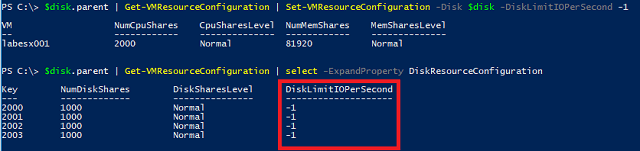

Now, to set this back to unlimited, we use the Set-VMResourceConfiguration cmdlet and specify the single disk. We use -1 to mean "unlimited". Finally, we output the configuration for all disks to verify.
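A minimal sketch of those steps, under the same assumptions (PowerCLI loaded, Connect-VIServer already run). Set-DiskIopsLimit is again a hypothetical helper name; it wraps the real Set-VMResourceConfiguration cmdlet with its -Disk and -DiskLimitIOPerSecond parameters, and defaults to -1 (unlimited).

```powershell
# Sketch only: assumes PowerCLI is loaded and Connect-VIServer has been run.
# Set-DiskIopsLimit is a hypothetical helper, not part of PowerCLI.
function Set-DiskIopsLimit {
    param(
        # A hard disk object, e.g. from Get-VM | Get-HardDisk
        [Parameter(Mandatory)]
        $Disk,
        # IOPS limit for this single disk; -1 means unlimited
        [long]$Limit = -1
    )
    # Fetch the VM's resource configuration and change only the given disk
    $Disk.Parent | Get-VMResourceConfiguration |
        Set-VMResourceConfiguration -Disk $Disk -DiskLimitIOPerSecond $Limit |
        Out-Null
    # Output the configuration for all disks on the VM to verify the change
    $Disk.Parent | Get-VMResourceConfiguration |
        Select-Object -ExpandProperty DiskResourceConfiguration
}
```

With this, `Set-DiskIopsLimit -Disk $disk` resets the disk to unlimited, while `Set-DiskIopsLimit -Disk $disk -Limit 1000` would re-apply a 1000 IOPS limit.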

Other solutions

When we talk to VMware support about this, we get a range of answers, from our testing not being valid because we cannot use IOMeter to generate load, and so on. The bottom line, though, is that their answer to per-VM limits is vSAN or VVOLs. While we use both solutions today, each comes with caveats. For a while, VVOLs on our storage arrays had limited replication features, but with VVOLs 2.0 this is now in place. However, even though replication is now supported, there are issues with... QoS. So VVOLs cannot be used for this at the moment.

vSAN is an interesting solution, but for us as a service provider it's not a viable option at the moment, due to heavy licensing costs and because it would not help us make use of our existing SANs.

Summary

While we do not have per-VM limiting, we can more or less live with the existing functionality as long as it's consistent and working. We do, however, need to automate setting the limits, and that will be the focus of an upcoming post.